PHP's command-line interface ignores the `max_execution_time` limit in your php.ini settings. This can be both a blessing and a curse (but more often the latter). I run some Drush scripts concurrently for batch operations, and I want to make sure they don't run away from me: they perform database operations and network calls, and they can sometimes slow down and block other operations.

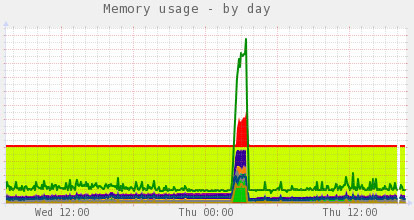

Can you tell when the batch got backlogged? CPU usage spiked to 20, and threads went from 100 to 400.

I found that some large batch operations (with hundreds of thousands of items to work on) would hold the server hostage and cause a major slowdown. When I went to the command line and ran:

```
$ drush @site-alias ev "print ini_get('max_execution_time');"
```

I found that the execution time was set to 0. Looking in PHP's docs for `max_execution_time`, I found that this is by design:

> This sets the maximum time in seconds a script is allowed to run before it is terminated by the parser. This helps prevent poorly written scripts from tying up the server. The default setting is 30. When running PHP from the command line the default setting is 0.

Unfortunately, I couldn't set `max_execution_time` for the CLI in php.ini. The CLI overrides that directive with 0 after the configuration files are parsed, although the value can still be changed at runtime. So I simply added the following line to my site's settings.php, which already opens with `<?php`, so no extra PHP tags are needed:

```php
ini_set('max_execution_time', 180); // Set max execution time explicitly (in seconds).
```

This sets the execution time explicitly whenever Drush bootstraps Drupal. Because settings.php is loaded on every page request, it applies to web requests as well. Now, in my Drush-powered function, I can check `max_execution_time` and use it as a baseline for deciding whether to continue processing the batch or stop. I need to do this because I have Drush run a bunch of concurrent threads for this particular batch process, and it runs all day, every day.
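The function itself isn't shown here, so what follows is only a minimal sketch of the idea under stated assumptions. The helper name `process_batch_within_time_limit()`, the callable-per-item design, and the 10-second `$safety_margin` are all hypothetical. The helper reads the limit with `ini_get('max_execution_time')` and treats 0 as "no limit". It stops taking new items once wall-clock time since the script started comes within the margin of the limit. It measures wall-clock time because, per the PHP manual, on non-Windows systems the hard limit only counts time spent executing the script itself. Time spent waiting on database queries or stream operations is not included.

```php
<?php

/**
 * Processes items until they run out or the time budget is nearly spent.
 *
 * Hypothetical helper: the name, the callable-per-item design and the
 * $safety_margin default are illustrative assumptions.
 *
 * @param array $items
 *   The items to work on during this run.
 * @param callable $process_item
 *   Called once per item; does the actual database or network work.
 * @param int $safety_margin
 *   Seconds to leave unused before the max_execution_time limit.
 *
 * @return int
 *   The number of items processed; the rest can wait for the next run.
 */
function process_batch_within_time_limit(array $items, callable $process_item, $safety_margin = 10) {
  // 0 (the CLI default) means there is no limit to measure against.
  $limit = (int) ini_get('max_execution_time');

  // Measure wall-clock time from the start of the script, so time spent
  // bootstrapping and waiting on MySQL or the network is counted too.
  $start = isset($_SERVER['REQUEST_TIME_FLOAT']) ? $_SERVER['REQUEST_TIME_FLOAT'] : microtime(TRUE);

  $processed = 0;
  foreach ($items as $item) {
    // Stop taking new items once we are within the margin of the limit.
    if ($limit > 0 && (microtime(TRUE) - $start) >= ($limit - $safety_margin)) {
      break;
    }
    $process_item($item);
    $processed++;
  }
  return $processed;
}
```

A Drush command could pass in the items it fetched for the current run. Anything left over would be picked up by the next run a few minutes later. Stopping cleanly between items, rather than relying on PHP's hard limit to kill the process, also avoids leaving an item half-processed.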

Now the server is much happier, since I no longer get hundreds of threads that end up locking the MySQL server during huge operations. I can have Drush run only every 3 minutes (matching the 180-second limit), and each run creates just a few threads that exit at about the time the next Drush run starts.

Comments

I use Drush scripts for one-off operations like migrating or converting data. Large database operations via Drush do cause the type of problems you describe. The Queue API might be what you're looking for:

http://api.drupal.org/api/drupal/modules%21system%21system.queue.inc/gr…

It runs via cron (which also essentially runs PHP in CLI mode), but it has some nice features like locking and reliable processing. If you have 10,000 items to process, the API can split them into chunks of x items that get processed in batches on every cron run.
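For reference, a minimal Drupal 7 sketch of the approach described above might look like the following. `hook_cron_queue_info()` and its `worker callback` and `time` keys are Drupal 7's way of declaring a queue that cron works through. The module name `mymodule`, the queue name `mymodule_items`, and the 60-second budget are hypothetical.

```php
<?php

/**
 * Implements hook_cron_queue_info().
 *
 * "mymodule" and "mymodule_items" are hypothetical names.
 */
function mymodule_cron_queue_info() {
  $queues['mymodule_items'] = array(
    // Called once per claimed item, with that item's data.
    'worker callback' => 'mymodule_process_item',
    // Maximum number of seconds cron spends on this queue per run.
    'time' => 60,
  );
  return $queues;
}

/**
 * Worker callback: processes one queued item.
 */
function mymodule_process_item($data) {
  // Hypothetical: the database and network work for one item goes here.
}
```

Items would be added elsewhere with `DrupalQueue::get('mymodule_items')->createItem($data);`. Each cron run then claims and processes queued items until the `time` budget is used up, leaving the rest for the next run.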

I use the Queue API for a few processes, but for this one in particular I need fine-grained control over when the job runs. I also like having a dedicated process I can monitor, so I can be sure nothing else is running in the same thread. With cron, other cron jobs could run and compete for resources at the same time, even with something like Elysia Cron.

That's not to say the Queue API is bad; for almost any continuous batch process, it's a very good option.