One Drupal site I manage has seen MySQL data throughput numbers rising constantly for the past year or so, and the site's page generation times have become progressively slower. After profiling the code with XHProf and monitoring query times on a staging server using Devel's query log, I found that there were a few queries that were running on pretty much every page load, grabbing data from cache tables with 5-10 MB in certain rows.

The two main culprits were cache_views and cache_field. These two tables alone contained more than 16MB of data, which was queried on almost every page request. There's an issue on drupal.org (_field_info_collate_fields() memory usage) to address the poor performance of field info caching for sites with more than a few fields, but I haven't found anything about better views caching strategies.

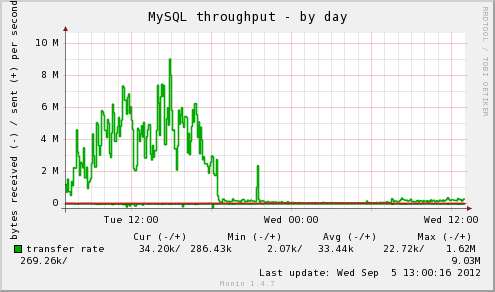

Knowing that these two tables, along with the system cache table, were queried on almost every page request, I decided I needed a way to cache the data so MySQL didn't have to spend so much time passing the cached data back to Drupal. Can you guess, in the following graph, when I started caching these things?

APC, Memcached, MySQL Query Cache?

If this site were running on multiple servers, or had a bit more infrastructure behind it, I would consider using memcached, which is a great caching system to run in front of MySQL, especially if you want to cache a ton of things and have a scalable caching solution (read this story for more). Running on one server, though, memcached doesn't offer a huge benefit compared to just using MySQL's query cache and tuning the innodb_buffer_pool_size so more queries come directly from memory. Memcached incurs a slight overhead due to the fact that data is transferred over a TCP socket (even if it's running on localhost).

MySQL's query cache is nice, but doesn't offer a huge speed benefit compared to how much more memory it needs to store a lot of queries.

I've often used APC (an opcode cache for PHP) to cache all a site's compiled PHP files in memory so they don't need to be re-read and compiled from disk on every page request (for most Drupal sites, if you're not already using APC for this purpose, you should be; unless you're using fast SSDs or a super-fast RAID array (and even in that case), APC will give probably a 20-50% gain in page load times).

However, I've never used APCs 'user cache' before, since I normally let APC run and don't want to worry about fragmentation or purging.

APC User Cache

There's a handy Drupal module, APC, which lets you configure Drupal to store certain caches in APC instead of in the database, meaning Drupal can read certain caches directly from RAM, in a highly-optimized key-value cache. APC caching is suited best for caches that don't change frequently (otherwise, you could slow things down due to frequent purging and fragmentation).

Some good candidates I've found include:

cache(includes entity_info, filter_formats, image_styles, and the theme_registry, many of which are queried every page load).cache_bootstrap(includes system_list and variables, queried every page load).cache_field(queried whenever field data is needed, grows proportionally to how many fields + instances you have).cache_views(queried whenever a view is loaded—even if your views are all stored in code).

You may find some other caches that are suitable for APC, but when you've decided which caches you'd like in APC, count up the data sizes of all the tables after the cache is warm, and then double that value. This is how many MB you should add to your existing apc.shm_size variable (usually in apc.ini somewhere on your server) to give a good overhead for user cache objects.

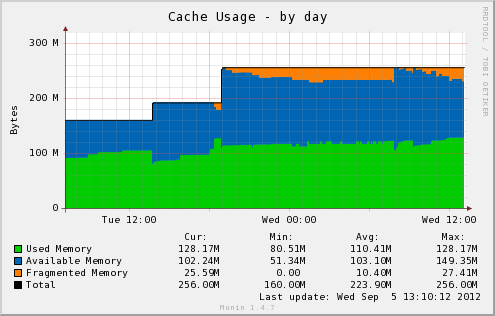

Monitor the APC cache size and usage (especially the free space and fragmentation amounts) using either the apc.php file included with APC (instructions), or using something like munin-php-apc along with munin monitoring. Make sure you have a good ratio of available vs. fragmented memory (more blue than orange, in the graph below):

When NOT to Use APC

APC is awesome for single-server setups. Especially if you have a site with relatively steady traffic, growing organically. APC is NOT helpful when you know you're going to need to scale quickly and will be adding servers (APC only benefits the server on which it's running). For a site that will exceed it's current capacity quickly, you'll probably want to consider first splitting your web server (Apache/PHP) from your MySQL server (but put them both in the same datacenter and connect via a private network), then consider adding a memcached server between the web and database server. From there, you can start adding more memcached servers and database slave servers as needed.

APC is also not very helpful if you don't have enough RAM on your server to store the cached objects (opcode + user cache objects) with at least 20-40% overhead (free space). In almost every situation, the default 32M apc.shm_size won't cut it, and in some cases, you'll need to push 128M or 256M before the server can run swiftly with a normal amount of fragmentation and purges.

Conclusion

It's always important to benchmark and profile everything. It's no use caching things in APC if you have a database query that takes 2 seconds to run, or an external web service call that takes 5! Once you've done things like tune database queries, check for obvious front-end performance flaws, and have your page load down to a couple seconds or less, start working on your caching strategy. APC isn't a good fit for everyone, but in this case, page generation times were cut at least 30% across the board and MySQL data throughput was cut by more than half!

A few important notes if you choose this route:

- Drush/CLI operations will effectively rebuild the APC cache for the command line every time they run, due to the way APC works (if

apc.enable_cliis turned on). However, it seems to have no effect on the separate APC cache for non-cli PHP. - Make SURE you monitor your APC memory usage, fragmentation, and purges. If you don't have about twice the required RAM allocated to APC, fragmentation and frequent purging might very well negate any significant performance benefit from using APC.

- Read through this Stack Overflow question for some more good notes on APC settings: Best APC settings to reduce page execution time.

Comments

Interesting article.

Since the Varnish module can enable Varnish to be used as Drupal backend cache, as well as page cache, it would be interesting to compare.

I have used APC on and off both for opcode cache and data cache (with APC module) and have mixed feelings about it. It can cause segmentation faults. Cached data is cleared when Apache is restarted (as it is at every log rotation on my Debian server). And if you run php as fcgid, there is a new APC cache per Apache process, and memory requirements can overrun your hardware and cause problems. But it is to be included in php 5.5, which is a vote of confidence from php developers.

When PHP-FPM is used, APC cache is shared between all FCGI instances.

great ! i've used APC's user cache once on a server with d6 where it was already installed by someone and I liked the results, too. And i was surprised that i havent heard about it being used more often.

I would suggest adding cache_menu to the list of candidates.

And I would think that even in a situation with horizontal scaling across several servers, some of the caches could be faster served from APC (and you might have more RAM available if MySQL is put elsewhere).

One problem with APC opcode memory - if you have several sites that dont share drupal core, your RAM consumption is going to grow with each site (or i dont know how to go around this problem).

As for the apache restart : it would probably help to include a call to the website to the logrotate job, so the caches would be prefilled ... but on the other hand, storing the data wont be so much overhead, will it ?

Yeah, the best use for APC is when you either use multisite or have one website on the server. If you have a shared host or a situation with a bunch of different codebases, APC will fill up quickly unless you explicitly exclude certain sites from the cache.

Nice article. A small note about APC. You should be very careful with your monitoring. APC does 0 fragmentation management and when your using it for both an opcode cache and a highly-trafficked user object cache, the likelihood of fragmentation goes up considerably. Worse, if it ever does get fragmented not only will your user cache be impacted, but your opcode caching will run slower or be incomplete.

Yeah, it's important to use munin or cacti to display graphs of fragmentation and purges over time. It's best to keep fragmentation under 50% (the lower the better), and to make sure that you always have a good cushion of available memory. If you don't monitor with a graphing utility, at least use the apc.php file to spot-check your server now and then.

Can you please explain how you place these cache_* tables into APC. I'm a little vague on what you did to move these DB entries into APC, rather then pulling from MySQL as is typical.

Follow the installation instructions included with the APC module. In my case, I edited settings.php and added in the proper configuration array variables to add APC as a cache backend and put certain cache bins into APC.

Doesn't MySQL have a query cache that should perform the same/similar task? I admin conservatively, so I would first try to increase the memory availability of this cache before switching to a higher-level cache.

Yes, but it's not as close to PHP in terms of access time as APC, and it's debatable how well the MySQL query caches work with InnoDB in general. I've had much better performance turning off the MySQL caches entirely and only using APC. However, having as large an InnoDB buffer pool as you can is a very good idea, because that will help with a lot of common queries that would be less helped by the query cache, and wouldn't be good candidates for APC or memcached.

It's worth trying File Cache module. Working with files doesn't mean always working with slow disk because of kernel buffer cache. And there's no moving once from e.g. memcached to kernel buffers and then from kernel buffers to PHP - it's (almost always) only from kernel buffer cache to PHP.

Disclaimer: I'm the maintainer of File Cache.

Definitely a viable option. Depending on your disk/RAM situation, and how Linux is configured, this might be a fast caching solution.